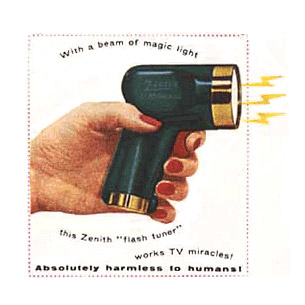

Zenith Flash-Matic TV Remote (1955)

Every channel surfer owes a debt to the Flash-Matic, the first wireless TV remote, which used flashes of light to turn the set on and off, control the volume, and cycle through channels.

Introduced in 1955, the Flash-Matic was soon followed by the Zenith Space Command, which used ultrasonic sound to change channels and ruled the lives of couch potatoes until infrared remotes took over in the early 1980s.

Before the remote, TV viewers were likely to pick one channel (of the three available) and watch until the test pattern came on. TV remotes arguably helped pressure broadcasters to produce better shows and more channels (though somehow we still ended up with “Gilligan’s Island” and “The Biggest Loser”). They also changed what we expect from our devices.

Now you can use an iPhone app to control not only your TV but also your DVR, computer, home audio gear, the lights in your house, burglar alarms, and even certain models of cars. You may never have to get off your duff again.

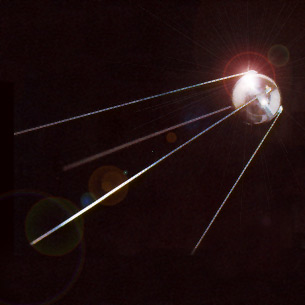

Sputnik (1957)

Like any massively successful project, the Internet has many fathers. But the earliest claim to paternity may belong to a 183-pound hunk of aluminum hurled into orbit on October 4, 1957. Sputnik not only launched the space race; it also started a technological cold war that led to the creation of the U.S. Defense Department’s Advanced Research Projects Agency (ARPA, later renamed DARPA).

“ARPA had a license to look for visionaries and wild ideas and sift them for viable schemes,” writes Howard Rheingold in his book The Virtual Community (Chapter Three is called “Visionaries and Convergences: The Accidental History of the Net”). “When [MIT professor J. C. R.] Licklider suggested that new ways of using computers … could improve the quality of research across the board by giving scientists and office workers better tools, he was hired to organize ARPA’s Information Processing Techniques Office.”

Licklider and his successors at ARPA sought out “unorthodox programming geniuses,” the hackers of their day. The result: ARPAnet, the precursor to today’s Internet. Without the space race, the Net might not yet exist. Other side benefits of that massive R&D infusion: advanced microprocessors, graphical interfaces, and memory foam mattresses.

Atari Pong (1972)

Blip … blip … blip …

Back in the early 1970s, no sane person would have predicted that swatting a small “ball” of pixels pinging between two rectangular “paddles” would spawn a $22 billion home gaming industry. Pong was not the first home video game system, but the massive popularity of its home version (introduced in 1976) paved the way for the first home PCs, as well as for today’s console-game universe.

Yet Pong is far from just a museum piece. Last March, students at Imperial College London announced that they had developed a version of Pong that could be controlled using only the eyes.

A Webcam attached to a pair of glasses uses infrared light to track the movements of one eye; software translates those movements into the motion of a rectangular paddle on the screen. The idea is to make computer games more accessible to people with disabilities. Even now, Pong remains a game changer.

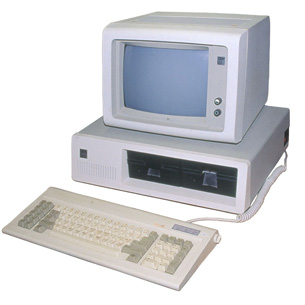

IBM PC Model 5150 (1981)

Before IBM unleashed the “PC” onto the world in August 1981, there were perhaps a dozen mutually incompatible personal computers, each requiring its own software and peripherals. Within a few years there were essentially three camps: the PC itself, the dozens of clone makers doing their best to copy IBM’s open design, and those pesky Apple guys.

The IBM label turned personal computers from a toy for hobbyists and gamers into a business tool, while software like Lotus 1-2-3 and WordStar gave businesses a reason to buy them.

The PC’s open architecture enabled software vendors and clone makers to standardize on one processor family and one operating system, driving down costs, making the PC ubiquitous, and completely changing the nature of work. Give credit to the Apple II (and VisiCalc) for proving that personal computers were more than just playthings, but thank IBM for turning them into an industry.

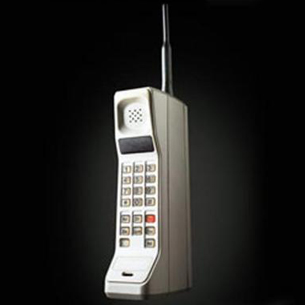

Motorola DynaTAC 8000X (1983)

More than a foot long, weighing more than 2 pounds, and with a price tag just shy of $4000, the DynaTAC 8000X would be unrecognizable as a cell phone to any of today’s iPhone-slinging hipsters.

Yet the shoebox-sized unit, approved by the FCC in 1983 and immortalized by Michael Douglas in 1987’s Wall Street, was the first commercially available cell phone that could operate untethered from an external power source.

It wasn’t until Motorola introduced the StarTAC in 1996 that cell phones began to adopt sleek form factors, and it took the Motorola Razr (2004) for phones to make a fashion statement. But neither would have existed without their older sibling. The DynaTAC helped change how and where we communicate.

IBM ThinkPad 700C (1992)

Though the first portable PCs appeared in the early 1980s (if you consider the 24-pound Osborne 1 “portable”), laptops didn’t really become corporate status symbols until IBM came out with its ThinkPad line in 1992.

Pretty soon you couldn’t show up in the executive lounge unless there was a bright red TrackPoint sprouting from the middle of your keyboard.

“In the large financial services organization I worked for, we had huge demand from the executives for the new IBM laptops,” recalls Brenda Kerton, principal consultant for Capability Insights Consulting. “To be seen as anyone in the business exec world, you just had to have one. They may not have had a clue how to manage e-mail or office software before the laptop, but they learned. And it snowballed from there. Laptops changed the conversations between IT and business executives.”

With the ThinkPad, technology suddenly went from being strictly for low-level geeks to something that was simply cool. And the business world has never been the same.

Broadband (1995)

The Internet, the World Wide Web, Amazon, Google, YouTube — all of them were game changers in their own way. But their impact wasn’t really felt until the bits flowed fast enough to let us access these things without losing our friggin’ minds.

Though U.S. businesses had been able to lease costly high-speed voice and data lines since the late 1960s, it wasn’t until 1995, when Canada’s Rogers Communications launched the first cable Internet service in North America, that consumers could surf the Web faster than a dial-up modem allowed (56 kbps at best, and that was on a good day).

That was followed by the introduction of DSL in 1999. Now more than 80 million Americans access the Net at speeds averaging just under 4 megabits per second (according to Akamai), though we continue to trail many developed nations on both broadband penetration and speed.

The game really changed when we leapt to 1.5 megabits per second and beyond. Now 3G and 4G wireless broadband promise to change the game again.

The Slammer Worm (2003)

Though it’s hard to isolate a single culprit for the rise of malware, the Slammer/Sapphire Worm is an excellent candidate. In January 2003, this worm on steroids spread worldwide in roughly 10 minutes, knocking out everything from network servers to bank ATMs to 911 response centers and causing more than $1 billion worth of damage. It marked the beginning of a new era in cyber security; after Slammer, the number and sophistication of malware attacks spiked.

In 2005, for example, German antivirus software test lab AV-Test received an average of 360 unique samples of malware each day, according to security researchers Maik Morgenstern and Hendrik Pilz. By 2010, that number had grown to 50,000 a day, or more than 18 million new samples a year.

“I think the increasing number of new malware samples is one of the biggest ‘game changers’,” notes AV-Test cofounder Andreas Marx. “The malware is optimized for nondetection by certain AV products, and as soon as a signature is in place the malware will be replaced again.”

The other big change since Slammer: Malware is now the preferred tool of professional cyber criminals, not just random Net vandals.

Apple iTunes (2003)

Yes, the iPod was and is a groovy little gadget, and so are the iPhone and the iPad. But without the music store, the iPod would have been just another gizmo for playing songs ripped from your CDs and tunes you’d illegally downloaded (you know who you are).

Without the video store, we might still be waiting for Hollywood to release its death grip on content. And without the App Store, the iPhone would just be, well, a phone, and the iPad might not even exist.

Little wonder that more than 10 billion songs, 375 million TV shows, and 3 billion apps have been downloaded since the iTunes Store opened its doors in April 2003. Giving people an easy way to buy content makes them (duh) want to buy content. It’s something that entertainment industry execs apparently needed Steve Jobs to figure out for them.

WordPress (2004)

Blogs have completely rewritten the rules of media. Pick any topic and you’ll find dozens, and possibly thousands, of blogs discussing it in mind-numbing detail. Now anyone can be a journalist and everyone’s an expert (at least in their own minds).

Though no single platform is responsible for the phenomenon, the open source WordPress (and its free blog hosting site, WordPress.com) is the big kahuna, particularly among the most popular blogs.

According to a July 2008 survey by the Pew Internet and American Life Project, one out of three Netizens reads blogs on a regular basis. They’ve become an essential part of any savvy company’s media campaigns. Companies like Google and Facebook use their official blogs to make major product announcements, while companies like Apple find themselves scooped by distinctly unofficial blogs. And for better or worse, blogs often drive the 24/7 news cycle. We have met the media, and it is us.

Capacitive Touchscreens (2006)

What made the iPhone different? It wasn’t the groovy geek in the black turtleneck; it was that magical capacitive touch screen, which uses your body’s own electrical properties to sense the location of your finger.

Patented in 1999 by Dr. Andrew Hsu of Synaptics, capacitive screens made their cell-phone debut on the LG Prada in 2006. But the iPhone catapulted the technology into the mainstream, leading to a new generation of apps that let you tap, swipe, stroke, and pinch your way to handset Nirvana.

“Apple didn’t invent the capacitive touchscreen, but it was the implementation of the technology in the original iPhone that completely altered the face of the smartphone market,” notes Ben Lang, senior editor of CarryPad, a Website focused on mobile Internet devices. “Apple realized that with touch input consistent enough for mainstream use and an intelligent soft keyboard, it could dedicate nearly the entire front of the phone to a screen. A ‘soft’ keyboard can be removed when it isn’t necessary, and make room for a rich and intuitive user interface.”

Now, of course, we expect every cool new mobile device to have this functionality. The multitouch screen made smartphones and their larger cousins like the iPad the “it” devices for the new millennium, no turtleneck required.

The Cloud (2010)

Vaporous? Possibly. Overhyped? Most definitely. Still, the always-accessible Internet will change the game more than any of these other technologies combined. Why? Because the cloud will essentially turn the Net into a utility: just flip a switch or turn a spigot and it’s ready to use, says Peter Chang, CEO of Oxygen Cloud, a cloud-based collaboration and data storage vendor.

“Just as we use utilities like water and electricity instead of wells and generators, we leverage the utility of the cloud to store, access, and replicate data, whether it’s Flickr photos, YouTube videos, Facebook, Salesforce, Google Docs, or online games,” says Chang. “Cloud storage liberates users from the confines of attached storage and empowers them to take their data anywhere.”

The Net began with a satellite shot into low-earth orbit. Now its future lies in the cloud. There’s something innately satisfying about that.
